A $15 kit from the dark web now fools most bank identity systems. Detection is not keeping up.

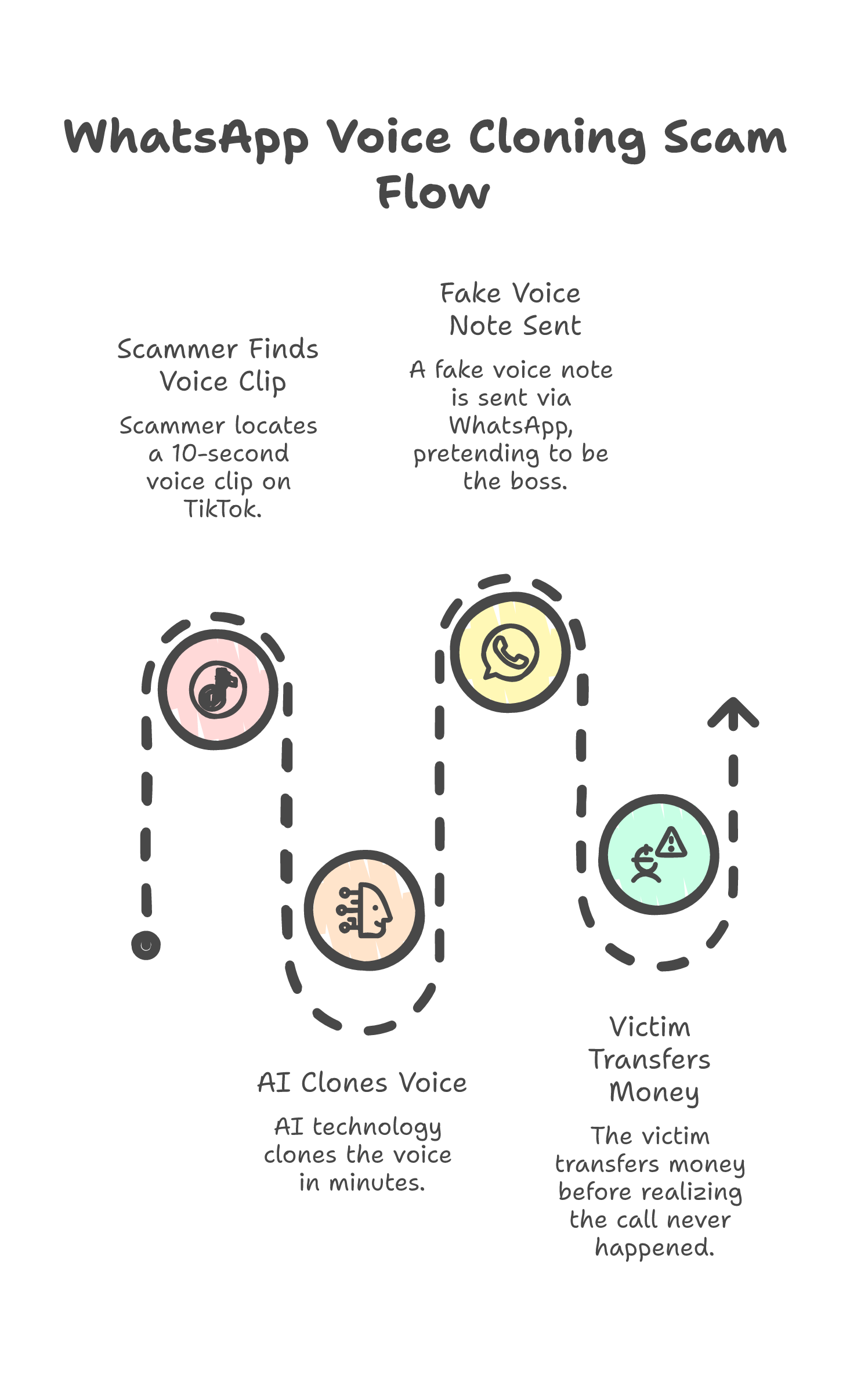

The call

A woman got a WhatsApp voice note from her boss telling her to transfer funds urgently. The voice was cloned from a 10-second TikTok clip. She sent RM5,000 before realizing the call never happened.

That is one case. In Southeast Asia alone, MCMC and local enforcement agencies logged 13,956 AI-driven scam cases in a single year, totaling RM2.11 billion in losses. Deepfake videos of heads of state and business leaders convinced 85% of viewers to act on fraudulent investment pitches, according to a 2024 UNODC report on cyber fraud in the region.

This is not a Southeast Asian problem. It is a structural one. The economics of synthetic media crossed a threshold that affects every system that relies on seeing or hearing something to believe it.

I build AI agent systems for a living. A core part of the job is making sure agents do not act on inputs they cannot verify. The deepfake problem is that same challenge, scaled to every person with a phone.

The numbers

Deepfake fraud across Asia-Pacific surged 1,530% between 2022 and 2023, according to Sumsub's 2023 Identity Fraud Report. That is not a typo. One thousand five hundred thirty percent in twelve months.

On the dark web, KYC bypass kits sell for $15. For that price you get a synthetic identity package that fools liveness detection, selfie verification, and document checks at most financial institutions. Gartner reported injection attacks against biometric systems rose 783% in 2024. What used to require specialized skills now requires half an hour and a credit card.

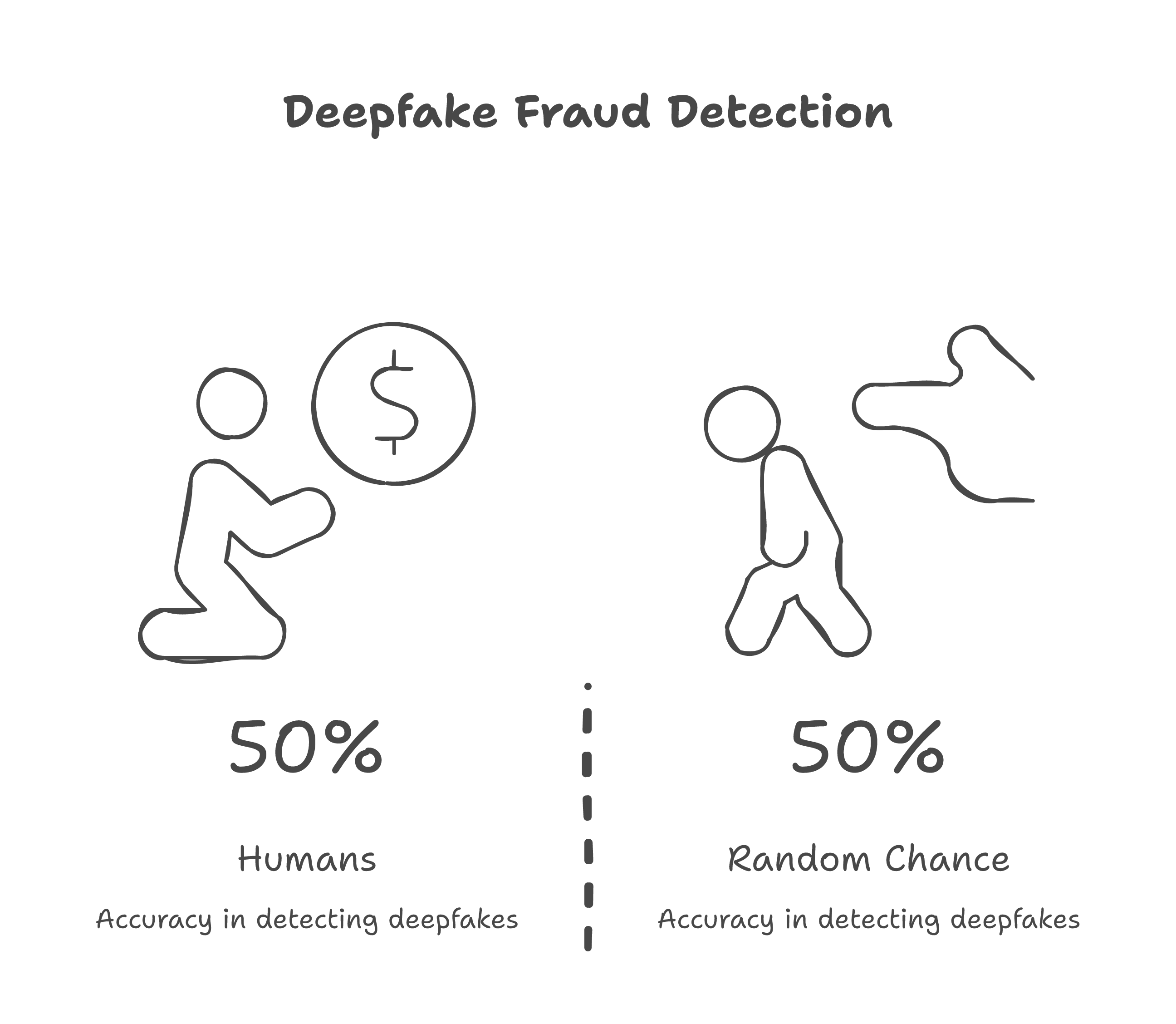

On the detection side, the picture is worse than most people assume. A 2024 study from University College London found that humans identify high-quality deepfakes at rates barely above random chance. A coin flip. And the counterintuitive part: prior exposure to deepfakes does not make people more skeptical. It makes them more susceptible. The brain normalizes the pattern. Familiarity breeds belief, not doubt.

85% of enterprises now report facing deepfake attacks, per Regula's 2024 survey. Global cost of AI-enabled fraud is in the tens of billions and climbing. The generation tools get cheaper every quarter. The detection tools do not.

Why detection is a losing game

The instinct when faced with synthetic media is to build better detectors. Train a model to spot artifacts, inconsistencies, unnatural facial movements. Ship it as an app, an API, a browser extension.

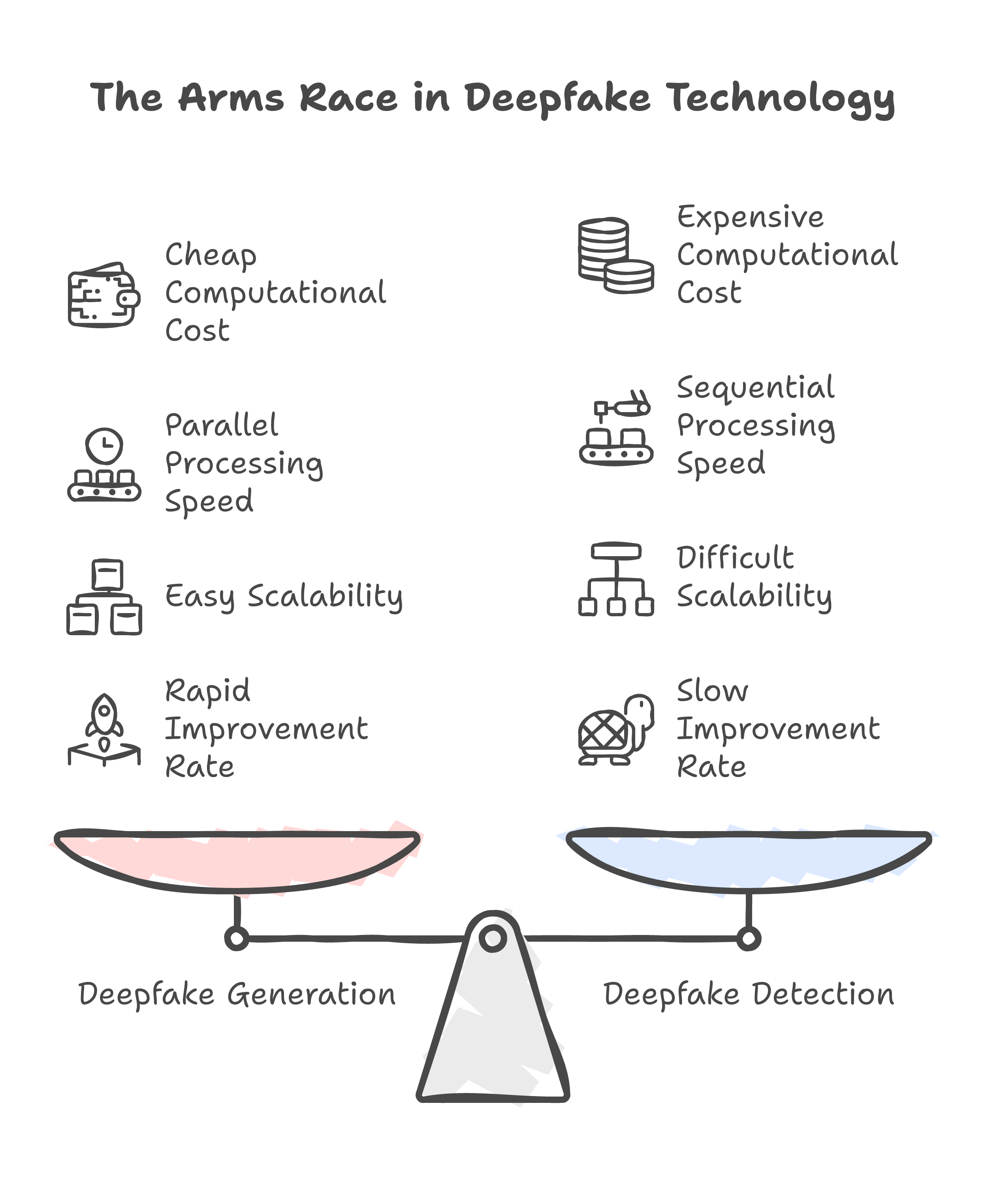

This is the same strategy the antivirus industry ran against polymorphic malware in the early 2000s. Signature-based detection worked until it did not. Malware authors had access to the same virus databases. They tested payloads against every major scanner before releasing them. Detection became a treadmill where defenders stayed one update behind.

Deepfakes follow the same dynamic. The teams building generators have full access to published detection research. They train against it. A detection model published in January gets folded into the generation pipeline by March. Every paper that improves detection simultaneously improves evasion.

The math makes it worse. Generating a deepfake is a single forward pass through a neural network. Detecting one requires analyzing multiple signals -- facial landmarks, audio spectrograms, compression artifacts, temporal consistency -- and making a probabilistic judgment. Generation is cheap and parallel. Detection is expensive and sequential. At internet scale, that asymmetry kills you.

Malaysia's Ministry of Digital is developing a deepfake verification app with Universiti Kebangsaan Malaysia. Reasonable project. But think about it practically: you cannot ask every person who gets a WhatsApp voice note to run it through a verification app before deciding whether to trust it. That is the ceiling of any detection strategy.

What is replacing detection

Forget detection for a moment. Look at what is actually shipping across jurisdictions right now.

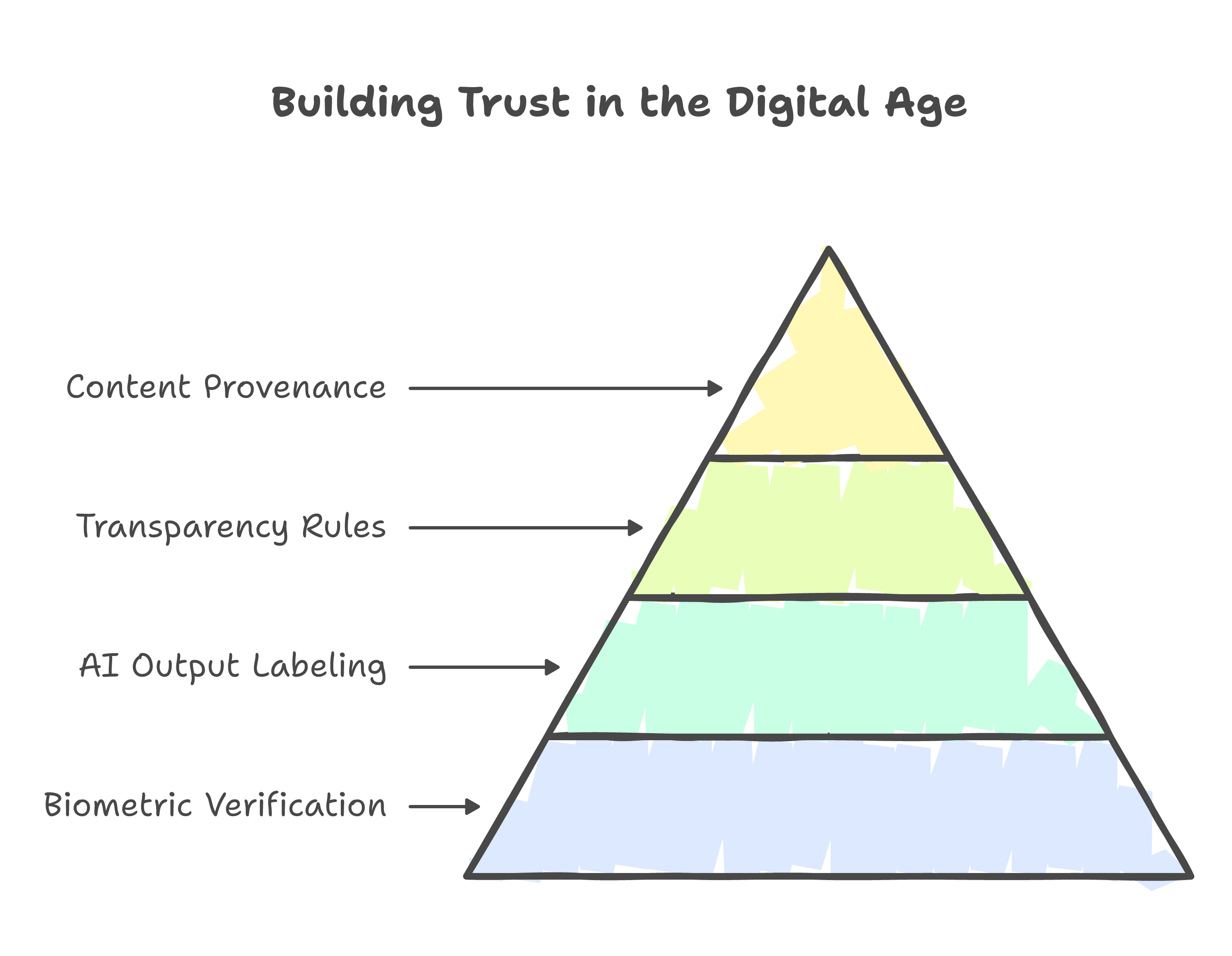

Vietnam mandated biometric verification for all bank account openings. Not optional. Required. Every new account, every payment card, verified against a national biometric database.

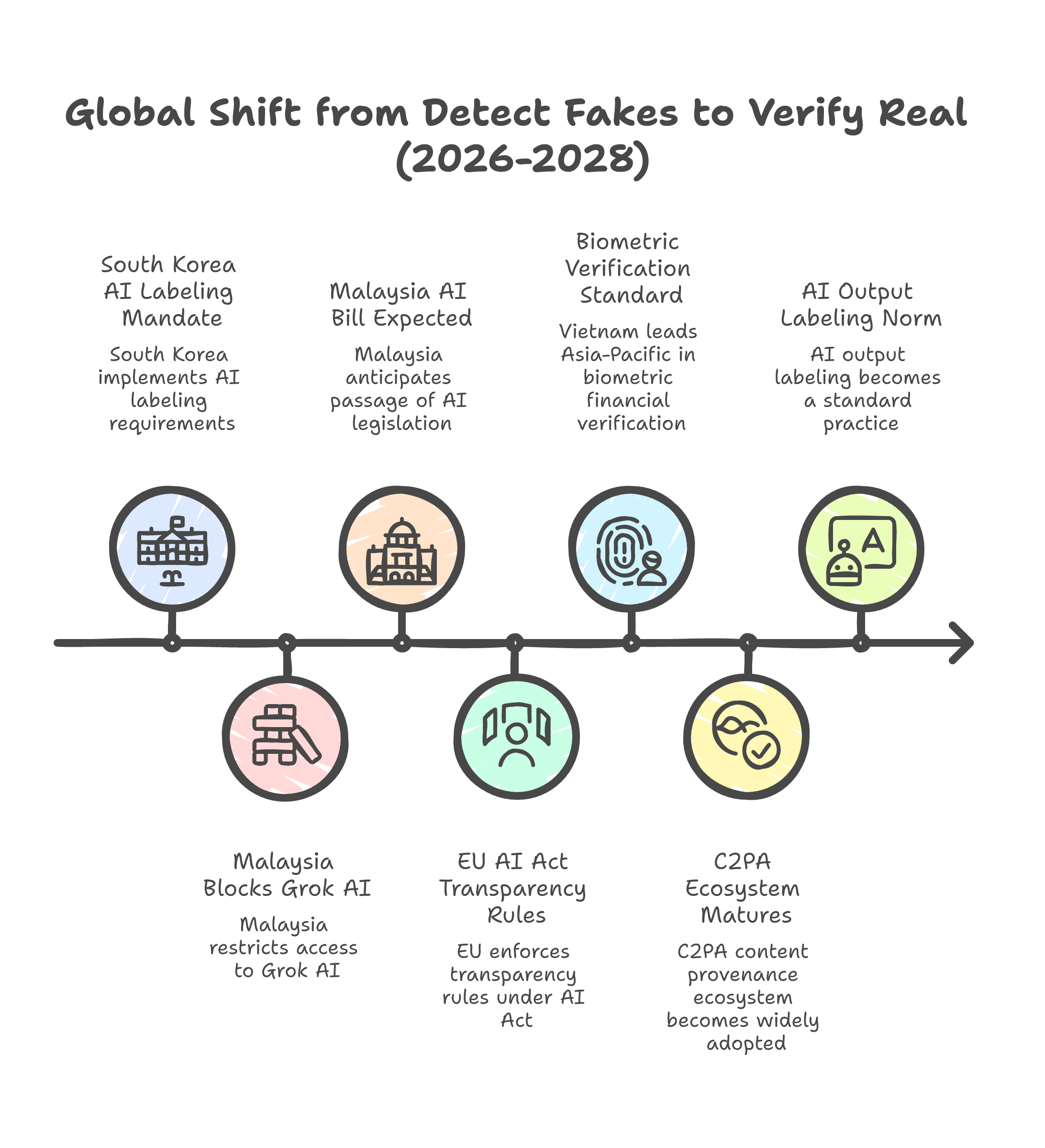

South Korea mandated labeling of all AI-generated output starting January 2026. If software created it, it must be marked. The burden is on the creator, not the viewer.

The European Union enforces its AI Act transparency rules in August 2026. Providers must mark AI-generated and manipulated content in machine-readable formats. Deepfake identification becomes a legal obligation.

The C2PA coalition -- Microsoft, Adobe, Google, and others -- now has thousands of members building content provenance infrastructure. Cryptographic signing at the point of creation. Watermarking that survives screenshots and re-uploads. Metadata chains that trace content from camera to publication.

Notice what these have in common. None of them are asking "is this fake?" They are all asking a different question: "can this be proven real?"

That is a different security model entirely. Detection is reactive -- you examine content after creation and guess. Provenance is proactive -- you establish authenticity at the point of origin. Detection scales with the volume of fakes. Provenance scales with the volume of legitimate content. Only one of those curves is survivable.

The shift

Here is the mental model change in one line.

Old question: "Can I spot the fake?" New question: "Can the real prove itself?"

When generating a convincing fake costs $15 and thirty minutes, the burden of proof has to move. It cannot sit with the viewer. It has to sit with the content and the systems that produce and distribute it.

In practice, that means identity verification goes biometric as baseline, not as an upgrade. Content provenance becomes infrastructure -- C2PA-style signing at the device level, not just the platform level. AI output labeling becomes law. South Korea and the EU are already there. And platform liability flips: if you distribute unlabeled synthetic content at scale, the cost lands on you, not the victim.

None of this eliminates deepfakes. Nothing will. But it changes the economics. Right now, faking is cheap and proving authenticity is hard. These measures flip that. Faking still works, but it works against systems designed to verify, not systems designed to trust by default.

Not every country is moving at this speed. Malaysia blocked Grok AI in January 2026 after xAI failed to implement safeguards against non-consensual deepfake generation. That was reactive -- necessary, but not systemic. The country's AI Bill is not expected until mid-2026 at the earliest. Across the region, the UNODC has documented transnational fraud factories that combine AI-generated content with underground banking networks. These are not lone actors. They are organizations with R&D budgets and operational playbooks. The gap between the sophistication of the attack and the maturity of the defense is widening.

But the direction is clear. Jurisdiction after jurisdiction is moving from "detect fakes" to "verify real." The speed varies. The direction does not.

Where this leaves you

Within the next 18-24 months, biometric verification for financial transactions will likely become standard across most of Asia-Pacific, following Vietnam. Content provenance requirements will expand beyond the EU as the C2PA tooling matures. AI output labeling will spread from a handful of early movers to a broadly adopted norm, because the alternative -- an internet where nothing can be trusted -- is not commercially viable for anyone.

If you are building AI products today, you should be designing for this world now. Not because regulation requires it yet, but because the trust infrastructure of the internet is being rebuilt, and the products that integrate provenance and verification early will have an advantage when the mandates arrive.

The detection arms race was worth running for a while. It bought time. But time is up.

Remember the woman with the WhatsApp voice note. No detection app would have saved her. What would have saved her is a system where the voice note had to prove it was real before she ever heard it. That is the system being built right now. The only question is whether you are building with it or waiting to be forced.

The only durable strategy is making the real verifiable, not making the fake detectable.